The failure was invisible. Nothing broke. No one complained. The numbers looked fine, deadlines were met, and decisions were made on time in clear, professional language. From the outside, everything seemed solid. That is what made it dangerous, especially in a year when conversations around AI books in 2026 were filled with confidence about smarter systems and better decisions.

A few weeks later, I reread a short internal note I had approved with help from an AI system. Something felt strange. The logic was clear, the tone was right, and the decision made sense. But I could not remember why I had agreed to it. I could explain the reasons and rebuild the argument, yet I could not recall what felt risky at the time or what I had struggled with. The tension of that moment was gone, replaced by a neat explanation that did not fully feel like mine. It was not wrong, which made it even harder to notice.

By then, I had read a lot about AI. Articles, research papers, and strong opinions about the future. I could discuss both sides of most AI topics with ease. But when it came time to decide what not to delegate and where to slow down instead of letting the system move faster, I noticed something. I kept defaulting. Not because I was lazy, but because something quieter was happening. My judgment was still there, yet it was getting thinner.

This article is not for beginners. If you are still amazed by what AI can do, this may feel too cautious. If you are looking for tools, tricks, or simple frameworks, you will not find them here. This is for people who already use AI in real work and now feel that something important may be slipping away, even though nothing seems obviously wrong.

The books below did not teach me how AI works. They made me uncomfortable, and that is why they mattered. I am limiting this to five because beyond that, reading can become an escape, another way to avoid the fact that no system will take full responsibility for your decisions. Popularity does not matter here, and neither does how new a book is. A book belongs on this list only if it stays with you and its ideas return later, usually when you do not expect them.

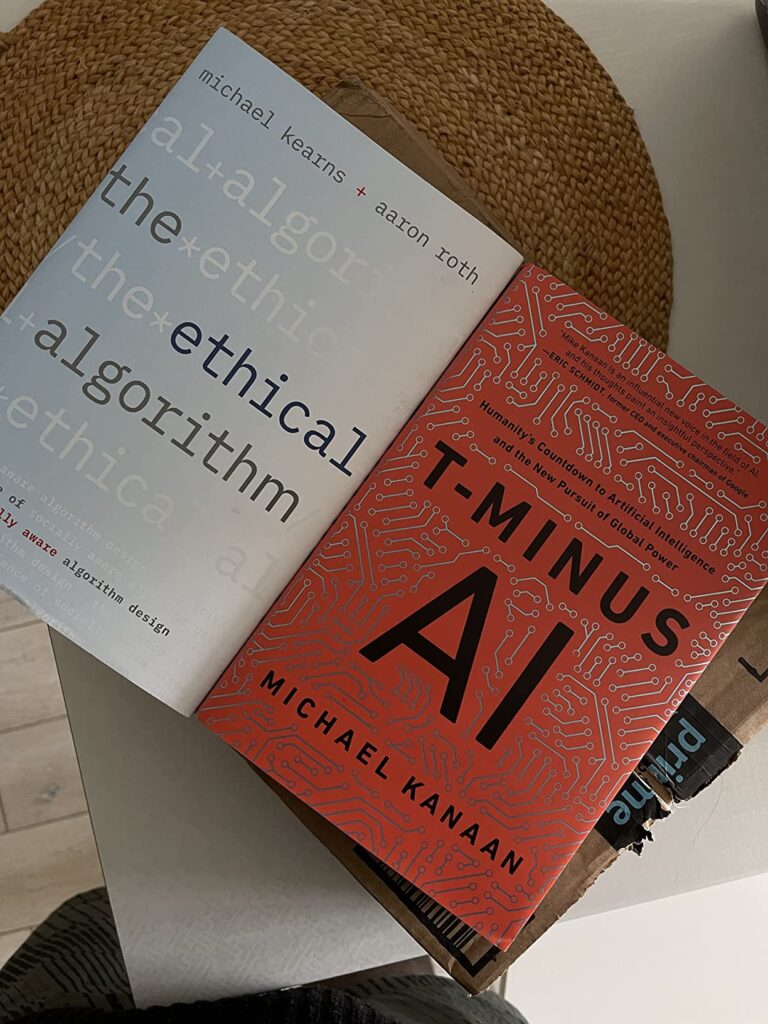

1. The Ethical Algorithm

By Michael Kearns and Aaron Roth, computer scientists working at the intersection of algorithms, incentives, and public consequences.

Most books about AI ethics try to sound reassuring. This one doesn’t.

It starts from a quieter admission: that many problems cannot be solved cleanly, only bounded. That fairness, privacy, accuracy, and efficiency cannot all be maximized at once, and that pretending otherwise is already a choice.

What stayed with me wasn’t a principle, but a constraint. The realization that every automated decision embeds trade-offs, whether we name them or not. That refusing to specify limits doesn’t remove responsibility; it just hides it.

After this book, I noticed myself asking different questions before approving AI-assisted systems. Not “does it work?” but “what is this allowed to break?” And who absorbs that breakage?

The book doesn’t moralize. It narrows your options until avoidance feels dishonest.

That narrowing doesn’t fade. It makes optimization feel heavier than it used to.

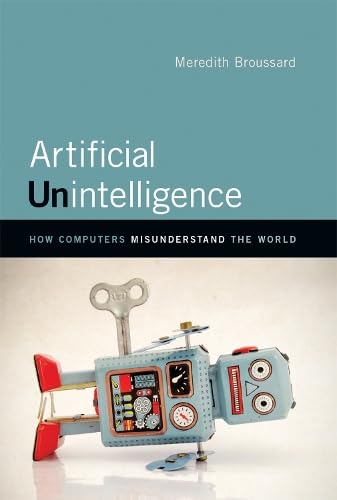

2. Artificial Unintelligence

By Meredith Broussard, a data journalist and former software developer.

This book is often described as critical, even pessimistic. That framing misses the point.

The real argument here isn’t that AI fails. It’s that we keep using it to end conversations that should stay open.

Broussard spends time on systems that technically work but fail to respect the shape of the problems they’re applied to. Context-heavy domains. Human messiness. Places where disagreement isn’t inefficiency, it’s information.

What stayed with me was how often AI is introduced not because it improves outcomes, but because it removes friction. Once something is automated, dissent starts sounding like resistance to progress.

After this book, I caught myself noticing when I reached for AI to simplify decisions I didn’t want to hold anymore. When “objectivity” was doing emotional labor for me.

That realization wasn’t flattering. But it was useful.

The book doesn’t ask you to abandon AI. It asks you to notice what kind of human work disappears first, and whether that disappearance is always a win.

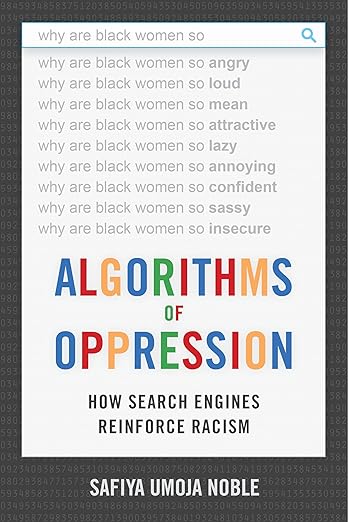

3. Algorithms of Oppression

By Safiya Umoja Noble, a scholar studying search, power, and invisibility in algorithmic systems.

This is not a book about broken algorithms. It’s a book about working ones.

Noble shows how ranking systems, search engines, and recommendation logic quietly reproduce social hierarchies, not through malicious intent but through neglect. Through what is considered neutral. Through what goes unquestioned.

What lingers isn’t outrage. It’s discomfort.

After reading, I became more alert to what systems consistently failed to surface. Which voices never appeared unless explicitly searched for. Which absences felt normal because they were stable.

In one case, I realized we had trusted a ranking output because it looked calm and complete. Only later did it become obvious how much it had filtered out: not by error, but by design.

This book sharpens your sense for quiet erasure. Not dramatic injustice. Structural invisibility.

4. Human Compatible

By Stuart Russell, one of the foundational figures in modern AI research.

This book doesn’t rush. That alone makes it rare.

Its central idea, that systems should remain uncertain about human preferences, sounds abstract until you sit with its implications. Uncertainty isn’t a flaw to eliminate. It’s a safeguard.

After reading, I became more cautious about closing loops too quickly. About systems that claim confidence prematurely. About delegating decisions that still felt morally unresolved.

In practice, this meant tolerating ambiguity longer than was comfortable. Leaving decisions partially unresolved. Resisting the urge to optimize everything into clarity.

The book doesn’t give you better answers. It makes you suspicious of answers that arrive too easily.

That suspicion has costs. It slows things down. It makes justification harder. But it also keeps responsibility closer.

5. The Eye of the Master

By Matteo Pasquinelli, a theorist examining AI through labor, measurement, and control.

This book doesn’t read like most AI writing. That’s its value.

Pasquinelli places AI not in the future, but in a long history of measurement systems: tools built to evaluate, extract, and optimize human labor. Intelligence here isn’t mystical. It’s administrative.

What stayed with me was the shift in framing. AI as a way of seeing, not thinking. As an extension of accounting logic, not cognition.

After this book, automation stopped feeling neutral. I began noticing how often “intelligence” was really about sorting, ranking, and enforcing norms at scale.

It doesn’t make AI seem dangerous. It makes it feel revealing.

Why only five

Because past this point, accumulation becomes avoidance.

More books won’t restore judgment if the problem is over-delegation. If the issue is that decisions no longer feel owned.

Here’s what this kind of failure demands. Not advice. A requirement:

You must reclaim decision latency.

Not speed. Not accuracy. Latency.

The pause where automation hasn’t closed the loop yet. Where responsibility still has weight. Where you feel the discomfort of choosing instead of accepting.

Most AI books optimize for confidence. These don’t. They quietly undermine it, just enough to return friction to places that had become too smooth.

I didn’t feel inspired after reading these books. I felt narrower. Less impressed. Slower to approve things I couldn’t personally stand behind.

That cost me time. In one case, it cost me convenience. Maybe even a little reputation for being “decisive.”

I’m not sure it paid off.

There’s a copy of one of these books still on my desk. Face down. The cover’s a little worn now. It keeps getting pushed aside, then noticed again. I still haven’t picked it up.
